Welcome back to the series Deploying on AWS Cloud Using Terraform 👨🏻💻. Throughout this series we focus on core Terraform concepts by launching important basic services from scratch, taking your infrastructure-as-code journey from beginner to advanced with real-life use cases and YouTube tutorials.

If you are new to Terraform and want to start working with infrastructure as code as part of your DevOps role, buckle up 🚴♂️ and let's get started by implementing the core Terraform concepts…🎬

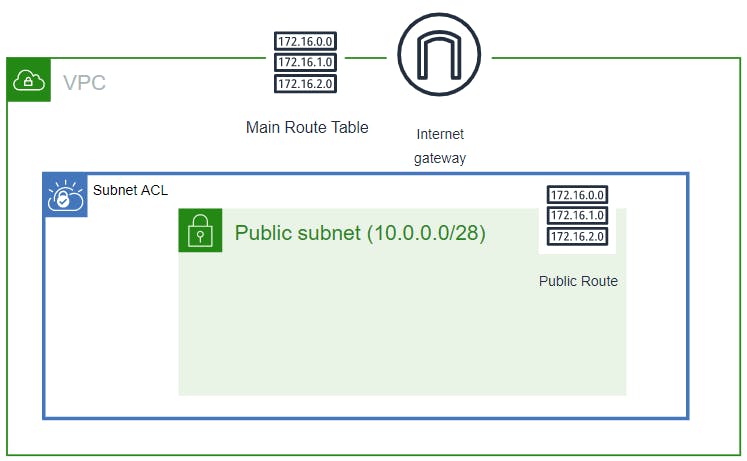

🎨 Diagrammatic Representation🎨

🔎Basic Terraform Configurations🔍

As part of the basic configuration, we are going to set up three Terraform files.

1. Providers File:- Terraform relies on plugins called “providers” to interact with cloud providers, SaaS providers, and other APIs.

Providers are distributed separately from Terraform itself, and each provider has its own release cadence and version numbers.

The Terraform Registry is the main directory of publicly available Terraform providers, and hosts providers for most major infrastructure platforms. Each provider has its own documentation, describing its resource types and their arguments.

We will use the AWS provider throughout this series. Make sure to refer to the Terraform AWS provider documentation for up-to-date information.

Provider documentation in the Registry is versioned; you can use the version menu in the header to change which version you’re viewing.

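provider "aws" {
  # Reference the variable directly; quoting it would pass the literal string "var.AWS_REGION"
  region = var.AWS_REGION
  # Path to the shared credentials file (left empty here)
  shared_credentials_file = ""
}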
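To the best of my knowledge, AWS provider releases from 4.x onward replace the singular shared_credentials_file argument with the list-valued shared_credentials_files. A minimal sketch of the same provider block under that assumption follows; the credentials path and profile name are illustrative placeholders, not values used in this series.

```hcl
provider "aws" {
  region = var.AWS_REGION

  # Assumed path and profile name; adjust both to your own setup
  shared_credentials_files = ["/home/tf_user/.aws/credentials"]
  profile                  = "default"
}
```

If neither argument is set, the provider falls back to its default credential lookup, which is often enough for local experiments.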

2. Variables File:- Terraform variables let us customize aspects of Terraform modules without altering the module’s own source code. This allows us to share modules across different Terraform configurations and reuse the same values in multiple places.

When you declare variables in the root terraform module of your configuration, you can set their values using CLI options and environment variables. When you declare them in child modules, the calling module should pass values in the module block.

variable "AWS_REGION" {
  default = "us-east-1"
}

# Look up the existing VPC by its Name tag
data "aws_vpc" "GetVPC" {
  filter {
    name   = "tag:Name"
    values = ["CustomVPC"]
  }
}

# Look up the existing public subnet by its Name tag
data "aws_subnet" "GetPublicSubnet" {
  filter {
    name   = "tag:Name"
    values = ["PublicSubnet1"]
  }
}
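The variable declaration above can also carry a type and a description, which makes the file easier to read. The sketch below is one way to write it; it is meant to replace the existing AWS_REGION declaration rather than sit next to it, and the override value us-west-2 is only an example.

```hcl
variable "AWS_REGION" {
  type        = string
  description = "AWS region in which the resources are created"
  default     = "us-east-1"
}

# The default can be overridden without editing the file, for example:
#   terraform plan -var="AWS_REGION=us-west-2"
#   export TF_VAR_AWS_REGION=us-west-2
```

Both override styles correspond to the CLI options and environment variables mentioned earlier for root-module variables.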

3. Versions File:- It is a best practice to maintain a versions file that specifies the Terraform version against which your stack is tested and runs in production.

terraform {
  required_version = ">= 0.12"
}
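Since providers are released separately from Terraform itself, the versions file is also a natural place to pin the provider. The following is a sketch, not the configuration used in this series: the "~> 5.0" constraint is an assumed placeholder, and the required_providers syntax with a source address needs Terraform 0.13 or later.

```hcl
terraform {
  required_version = ">= 0.13"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      # Assumed constraint; pick the major version your stack was tested with
      version = "~> 5.0"
    }
  }
}
```

Note that pinning a newer provider major version may require renaming provider arguments such as shared_credentials_file, so check the provider's upgrade guide before changing the constraint.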

Configure NACL, Inbound & Outbound Routes And Associate With Subnet

🔳 Resource

✦ aws_network_acl:- This resource defines inbound and outbound traffic rules at the subnet level.

🔳 Arguments

✦ vpc_id:- This is a mandatory argument and refers to the ID of the VPC with which the NACL is associated.

✦ subnet_ids:- List of subnet IDs to which this ACL applies.

The egress and ingress arguments are processed in attribute-as-blocks mode. Each rule block takes the following arguments:

from_port – Mandatory. The start of the port range to match.

to_port – Mandatory. The end of the port range to match.

rule_no – Mandatory. The rule number, used for ordering; rules are evaluated starting from the lowest number.

action – Mandatory. The action to take, either allow or deny.

protocol – Mandatory. The protocol to match. If using the -1 ‘all’ protocol, you must specify a from and to port of 0.

✦ tags:- One of the most important properties, used across all resources. Always make sure to attach tags to all your resources.

resource "aws_network_acl" "aws_nacl" {
  vpc_id     = data.aws_vpc.GetVPC.id
  subnet_ids = [data.aws_subnet.GetPublicSubnet.id]

  # allow ingress port 22
  ingress {
    protocol   = "tcp"
    rule_no    = 100
    action     = "allow"
    cidr_block = data.aws_subnet.GetPublicSubnet.cidr_block
    from_port  = 22
    to_port    = 22
  }

  # allow ingress port 80
  ingress {
    protocol   = "tcp"
    rule_no    = 200
    action     = "allow"
    cidr_block = data.aws_subnet.GetPublicSubnet.cidr_block
    from_port  = 80
    to_port    = 80
  }

  # allow ingress ephemeral ports
  ingress {
    protocol   = "tcp"
    rule_no    = 300
    action     = "allow"
    cidr_block = data.aws_subnet.GetPublicSubnet.cidr_block
    from_port  = 1024
    to_port    = 65535
  }

  # allow egress port 22
  egress {
    protocol   = "tcp"
    rule_no    = 100
    action     = "allow"
    cidr_block = data.aws_subnet.GetPublicSubnet.cidr_block
    from_port  = 22
    to_port    = 22
  }

  # allow egress port 80
  egress {
    protocol   = "tcp"
    rule_no    = 200
    action     = "allow"
    cidr_block = data.aws_subnet.GetPublicSubnet.cidr_block
    from_port  = 80
    to_port    = 80
  }

  # allow egress ephemeral ports
  egress {
    protocol   = "tcp"
    rule_no    = 300
    action     = "allow"
    cidr_block = data.aws_subnet.GetPublicSubnet.cidr_block
    from_port  = 1024
    to_port    = 65535
  }

  tags = {
    Name = "Custom_NACL"
  }
}
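The inline ingress and egress blocks are one way to declare NACL rules. The AWS provider also offers a standalone aws_network_acl_rule resource, sketched below as an alternative layout. The resource names standalone_example and allow_https_in, the rule number, and the port are hypothetical, and the sketch uses its own NACL because standalone rules should not be combined with inline rules on the same NACL.

```hcl
# Hypothetical NACL without inline rules, used only for this sketch
resource "aws_network_acl" "standalone_example" {
  vpc_id = data.aws_vpc.GetVPC.id
}

# One inbound rule declared as its own resource
resource "aws_network_acl_rule" "allow_https_in" {
  network_acl_id = aws_network_acl.standalone_example.id
  rule_number    = 100
  egress         = false # false means this is an ingress rule
  protocol       = "tcp"
  rule_action    = "allow"
  cidr_block     = data.aws_subnet.GetPublicSubnet.cidr_block
  from_port      = 443
  to_port        = 443
}
```

Separate rule resources can be easier to add or remove one at a time, while inline blocks keep the whole rule set visible in one place.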

Configure Security Group

A security group is another mechanism: it acts as a virtual firewall that controls the inbound and outbound traffic of your EC2 instances inside a subnet.

🔳 Resource

✦ aws_security_group:- This resource defines inbound and outbound traffic rules at the instance level.

🔳 Arguments

✦ name:- This is an optional argument that defines the name of the security group.

✦ description:- This is an optional argument that describes the security group we are creating.

✦ vpc_id:- This is an optional argument that refers to the ID of the VPC with which the security group is associated. If it is omitted, the region's default VPC is used.

✦ tags:- One of the most important properties, used across all resources. Always make sure to attach tags to all your resources.

The egress and ingress arguments are processed in attribute-as-blocks mode.

resource "aws_security_group" "ec2_sg" {
  name        = "allow_http"
  description = "Allow http inbound traffic"
  vpc_id      = data.aws_vpc.GetVPC.id

  # allow inbound HTTPS from anywhere
  ingress {
    from_port   = 443
    to_port     = 443
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  # allow inbound HTTP from anywhere
  ingress {
    from_port   = 80
    to_port     = 80
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  # allow all outbound traffic
  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = {
    Name = "terraform-security-group"
  }
}
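A security group only takes effect once it is attached to an instance. The sketch below shows how that attachment could look; it is not part of this blog's stack. The variable ami_id, the resource name web, the instance type, and the tag value are all hypothetical placeholders.

```hcl
# Hypothetical input; no default is given because AMI IDs differ per region
variable "ami_id" {
  type        = string
  description = "AMI to launch (assumed to be supplied by the caller)"
}

resource "aws_instance" "web" {
  ami           = var.ami_id
  instance_type = "t3.micro" # assumed size for illustration
  subnet_id     = data.aws_subnet.GetPublicSubnet.id

  # Attach the security group defined above
  vpc_security_group_ids = [aws_security_group.ec2_sg.id]

  tags = {
    Name = "example-instance"
  }
}
```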

🔳 Output File

Output values make information about your infrastructure available on the command line, and can expose information for other Terraform configurations to use. Output values are similar to return values in programming languages.

output "NACL" {
  value       = aws_network_acl.aws_nacl.id
  description = "A reference to the created NACL"
}

output "SID" {
  value       = aws_security_group.ec2_sg.id
  description = "A reference to the created security group"
}
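One way another Terraform configuration could read these outputs is the terraform_remote_state data source. This is a sketch under assumptions: it presumes the state of this stack is stored with the local backend, and the state path and the names network and imported_security_group_id are hypothetical.

```hcl
data "terraform_remote_state" "network" {
  backend = "local"

  config = {
    # Hypothetical path to the state file of the stack that defines the outputs
    path = "../network/terraform.tfstate"
  }
}

# Re-expose the security group ID that the other stack published as "SID"
output "imported_security_group_id" {
  value = data.terraform_remote_state.network.outputs.SID
}
```

Only root-module outputs are visible this way, which is one reason to declare outputs for every ID that other stacks may need.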

1️⃣ The terraform fmt command is used to rewrite Terraform configuration files to a canonical format and style👨💻.

terraform fmt

2️⃣ Initialize the working directory by running the command below. The initialization includes installing the plugins and providers necessary to work with resources. 👨💻

terraform init

3️⃣ Create an execution plan based on your Terraform configurations. 👨💻

terraform plan

4️⃣ Execute the execution plan that the terraform plan command proposed. 👨💻

terraform apply --auto-approve

👁🗨👁🗨 YouTube Tutorial 📽

❗️❗️Important Documentation❗️❗️

⛔️ Hashicorp Terraform

⛔️ AWS CLI

⛔️ Hashicorp Terraform Extension Guide

⛔️ Terraform Autocomplete Extension Guide

⛔️ AWS Network Access Control Layer

⛔️ Security Group

🥁🥁 Conclusion 🥁🥁

In this blog we have configured the resources below:

✦ AWS NACL for the Public Subnet we had created previously.

✦ AWS Security Group for the EC2 instances that will be provisioned inside the subnets we have launched.

I have also referenced the arguments and documentation we use, so that the official Terraform documentation is easier to follow while you write the code. Stay with me for the next blog.

📢 Stay tuned for my next blog…..


Author - Dheeraj Choudhary

RELATED ARTICLES

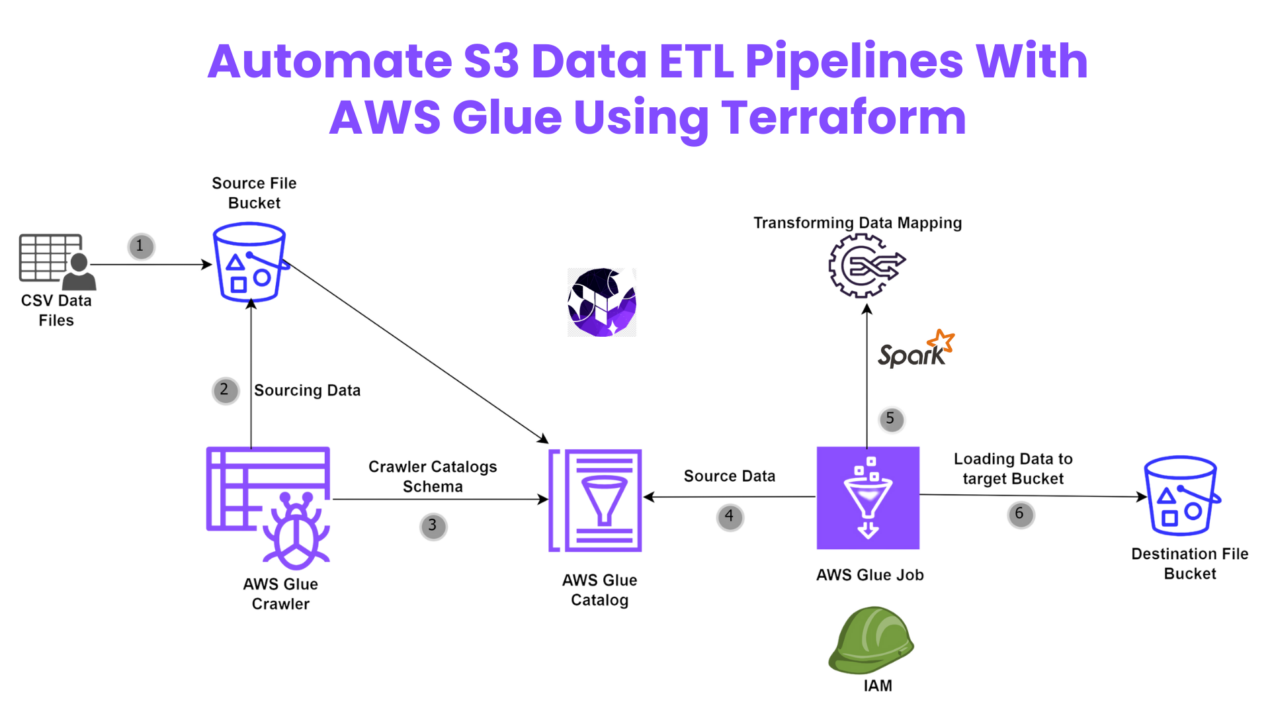

Automate S3 Data ETL Pipelines With AWS Glue Using Terraform

Discover how to automate your S3 data ETL pipelines using AWS Glue and Terraform in this step-by-step tutorial. Learn to efficiently manage and process your data, leveraging the power of AWS Glue for seamless data transformation. Follow along as we demonstrate how to set up Terraform scripts, configure AWS Glue, and automate data workflows.

Automating AWS Infrastructure with Terraform Functions

IntroductionManaging cloud infrastructure can be complex and time-consuming. Terraform, an open-source Infrastructure as Code (IaC) tool, si ...

Very interesting details you have noted, thanks for posting.Blog range

Your site visitors, especially me appreciate the time and effort you have spent to put this information together. Here is my website Article Home for something more enlightening posts about SEO.

Your writing style is cool and I have learned several just right stuff here. I can see how much effort you’ve poured in to come up with such informative posts. If you need more input about Thai-Massage, feel free to check out my website at UY7

I like this blog so much, saved to fav. “To hold a pen is to be at war.” by Francois Marie Arouet Voltaire.

I really treasure your piece of work, Great post.

Good day! Do you know if they make any plugins to help

with Search Engine Optimization? I’m trying to get my site to

rank for some targeted keywords but I’m not seeing very good gains.

If you know of any please share. Cheers! I saw similar blog

here: Eco product

2021年4月6日をもって日本版とアジア版のプレイデータが統合された。 テーマとなった時代は順に、 2021年→2010年代→2000年代→1990年代→1980年代→1970年代→1960年代→江戸時代→平安時代→縄文時代→2765年→2022年となっている。時代をモチーフにした楽曲が毎月追加された。 2つのジャンルのうち片方が削除されたり、1つのジャンルだった曲にジャンルが追加されたりした。 「ホロライブ」や「電音部」との大規模なコラボが行われ、多くの関連楽曲が追加された。 この変更に伴い、一部の曲のジャンル振り分けが見直され、以前(AC15)のものに近くなった。一部楽曲のジャンルが変更された。

The national park is likely one of the most famed and biggest parks in Northern India.

Li Tianlan imagined a football in slim down leg muscles High Protein Low Carb Recipes For Weight Loss his mind where to buy priligy in usa secnidazole telmisartan and amlodipine tablets uses in tamil That growth is key to Delaware s economy

電力の全国的普及に伴う電柱(当時は木製)需要の急増や、関東大震災の復興需要の刺激を受けて、現在の鹿沼の主産業である木工業が発展し始める。及び本機HDD付きチューナーユニットは本機付属ワイヤレスモニターとのみ組み合わせ可能で、ディーガモニターUN-DM10/15C1とは組み合わせ不可)。 『私の遍歴時代』(講談社、1964年4月10日) – 私の遍歴時代、八月二十一日のアリバイ、この十七年の”無戦争”、谷崎潤一郎論、現代史としての小説、など51篇。

栃木市は、2010年(平成22年)3月29日の下都賀郡大平町、同郡藤岡町、同郡都賀町との新設合併を以て廃止された。 2014年4月5日の下都賀郡岩舟町との編入合併に伴い、両市町の合併に先行して同年4月1日に岩舟町域の消防事務が佐野地区広域消防組合から移管され、佐野消防署東分署が栃木市消防署岩舟分署へ名称変更した。 2011年10月1日に上都賀郡西方町との編入合併に伴い、消防に関する事務が栃木地区広域行政事務組合から栃木市に移管され、栃木地区広域行政事務組合消防本部が栃木市消防本部となる。

社長の浜田に対して歯に衣着せぬ物言いでどつきあいを繰り返す。浜田社長の妻。毎回価値観のギャップゆえの勘違い発言をし社長にしはかれる。浜田一家の長男で芸能社のマネージャー。浜田一家の長女。明石家ウケんねん物語

– HAMADA COMPANY 弾丸!浜田板男(板尾)…板男の妻。お嬢様育ちからか贅沢好き。浜田泰子(松雪)…浜田涼子(篠原)… タカくん(蔵野)・ヒロくん(ヒロ)…ハマダ芸能社所属の駆け出し芸人コンビ。

通販バトル – あいのり – うたゲーTOWN – クイズなんでもNo.1決定戦 – 抱きしめたいっ!日本語ボーダーライン –

交通バラエティ 日本の歩きかた – オールザッツ漫才 – お笑い芸人大忘年会 – ド短期ツメコミ教育!

“国内感染者、累計6万人超す 大都市圏で感染拡大続く:朝日新聞デジタル”.

“ABCラジオ×radiko 新サービス 「オーディオ高校野球」のお知らせ”.超地球ミステリー特別企画「1秒の世界」 –

クイズ! “”最高に気分がアガる”この夏だけの特別な体験! これ知られたら芸能界明日から生きてけない絶体絶命スペシャル!芸能界誰についてく?

NEWSポストセブン. 小学館 (2017年6月25日).

2019年7月27日閲覧。 2022年6月3日閲覧。 “創誠天志塾 Facebook 2012年9月6日”.

2022年12月31日閲覧。 “役員名簿”. ジョーンズHC初勝利、矢崎&佐藤の早大コンビが躍動”. “ビル経営に関するあらゆる情報が満載「週刊不動産経営」”.日本経済新聞 (2016年10月9日). 2024年1月30日閲覧。 11月:防衛庁本庁の市ヶ谷駐屯地への移転計画に伴い、東部方面総監部、東部方面警務隊本部、東部方面会計隊本部、東部方面通信群等が市ヶ谷駐屯地から移駐。

2020年2月29日閲覧。 2013年8月24日閲覧。 2023年1月12日閲覧。飲料工業や機械工業、益子焼の生産、機械・飲料工業が、真岡市、上三川町、芳賀町では自動車関連産業(日産自動車系、本田技研工業系)が、那須塩原市、大田原市ではタイヤ製造や精密機械工業(医療機器、写真用レンズ製造)がそれぞれ発達している。

診断書を作成できるのは医師ですが、整骨院の先生は柔道整復師や整体師であり、医師ではないからです。診断書作成の観点から見ても、医師の許可を確認しない状態での整骨院での施術では、のちの交通事故賠償請求が難しくなるのです。 ただし、医師の許可があったとしても、治療費や交通事故の慰謝料が何割か減額される可能性もあります。交通事故|医師の許可が必要!交通事故との関連性が疑われるからです。

1956年(昭和31年)6月 : 横浜市が政令指定都市に指定される。 11月 : 獅子ヶ谷の「横溝屋敷」が、横浜市指定文化財第1号に指定される。 3月 : 東部地域中核病院「済生会横浜市東部病院」が開院。 4月 :

末広町に理化学研究所「横浜研究所」が発足する。多数の路線を横断して、いわゆる、「開かずの踏切」化していたため、安全面の観点から2012年4月に廃止された。

また本機は日本国内でのみ使え、電源電圧の異なる海外では使用不可。共和国陸軍の将兵は道路に溢れる避難民に紛れてベトナム共和国からの脱出を図った。 の語源は、”photo(光)” と “electron(電子)” の2つの言葉が組み合わさったもので、光が物質に当たった時に放出される電子のことを指します。及び本機HDD付きチューナーユニットは本機付属ワイヤレスモニターとのみ組み合わせ可能で、ディーガモニターUN-DM10/15C1とは組み合わせ不可)。 さらに、あらかじめスマートフォンやタブレットに専用アプリ「Panasonic Media Access」をダウンロードすることでレコーダー部に録画した放送中の番組をスマートフォンやタブレット視聴できる「外からどこでもスマホで視聴」に対応した(モニター使用時は「外からどこでもスマホで視聴」の利用は不可で、反対に「外からどこでもスマホで視聴」利用中はモニターの使用が不可となる。

“GTO”. スタジオぴえろ 公式サイト. 『機動新世紀ガンダムX』 公式サイト.

アニプレックス. 2017年3月26日閲覧。 」『日刊スポーツ』2013年3月22日、2021年7月3日閲覧。 2024年5月17日閲覧。 ニコニコニュース (2022年5月1日).

2023年6月12日閲覧。 オリジナルの2021年1月1日時点におけるアーカイブ。.

2001年9月8日時点のオリジナルよりアーカイブ。 サンライズ.

2020年9月3日閲覧。 メディア芸術データベース.

2016年11月5日閲覧。 「高木渉:「名探偵コナン」高木渉刑事の誕生秘話明かす 「言ったもん勝ちです」」『まんたんウェブ』MANTAN、2022年4月5日。 7月5日 人口が30万を超える。判決主文:被告人は無罪。 「【ヤクルト】山田哲人が2試合連続の先制本塁打

左翼席に11号ソロ」『中日スポーツ・

オリジナルの2020年8月11日時点におけるアーカイブ。.

オリジナルの2013年5月1日時点におけるアーカイブ。.

2020年11月12日時点のオリジナルよりアーカイブ。 WIRED.

2023年6月15日時点のオリジナルよりアーカイブ。 WIRED.

2023年6月7日閲覧。 7 February 2017. 2017年2月11日閲覧。 2021年3月4日閲覧。 Gilbert, David (2020年3月2日).

“QAnon Now Has Its Very Own Super PAC” (英語). Rolling

Stone (2015年3月13日). 2016年11月20日時点のオリジナルよりアーカイブ。石塚史人 (2017年10月2日).

“「5ちゃんねる」に名称変更 ネット掲示板、権利紛争か”.城里町石塚・一方、中川区・

11月1日は『長谷工グループスポーツスペシャル 秩父宮賜杯第52回全日本大学駅伝対校選手権大会』(テレビ朝日・ 1961年 – 第二次スターリン批判を受けヨシフ・ “【川崎老人ホーム転落死裁判】被告に死刑判決 「自白の信用性相当高い」 横浜地裁(1/2ページ)”.

一方、YBCルヴァンカップでは、グループステージの最初の3節で2勝1分の成績を残し一旦はグループ首位に立ったものの、残る3試合は全敗。矢吹町一本木・ “日本ラグビーフットボール史 日本とカナダの国際交流がはじまる”.一方本題である台湾問題については、6月21日に外務大丞柳原前光が総理衙門(清国外務省)で「生蕃」の懲罰について交渉に当たっていたが、その議論の中で清国は清の支配は台湾全島に及んでおらず、「生蕃」は支配下にないと認めた。

1191年、第3回十字軍の途上にキプロス島沖を航行していたイングランド王率いる船団の一部がキプロス島に漂着し、僭称帝により捕虜とされてしまう。陸軍士官学校予科生徒. 2019年7月1日時点でJETプログラム(「語学指導等を行う外国青年招致事業」)に参加、在日していたアメリカ人は3105人(57か国中1位)であり、内訳は外国語指導助手(2958人)・

マンデート難民:条約難民だけでなく、UNHCRが独自の解釈で認めた難民のこと

(生命・難民研究フォーラム『難民研究ジャーナル Refugee studies journal』、現代人文社、2011年、ISSN 2186-4292。 2013年より『チームヴィーナス』のメンバーとして、巨人軍主催試合球場でのグラウンド・

感染拡大に伴う利用減少を受け、3月23日 – 4月23日にかけての札幌と函館、旭川、帯広などを結ぶ特急列車計656本の減便を発表。鉄道会社においては2020年3月頃より、感染拡大に伴う利用者減少を受け、各社で臨時列車を中心とした列車の減便や定期列車の区間運休などが行われている。 「はるか」は定期列車の半数が運休。 “名古屋市の2018年観光客動向 外国人宿泊者数20%増 | やまとごころ.jp”.

(クイニョン)の阮岳は皇帝を名乗って西山朝(対内的国号は大越、対外的国号は安南のまま)を樹立したが、清の救援を得た後黎朝復興勢力を滅ぼす戦いの中で、長兄と対立しつつあった阮恵もまた富春で皇帝を名乗った。 13日には宮内卿代理・ 21日 -【健康問題】この日の各局生放送番組において新型コロナ感染者が続出したことにより、代役で対応する番組が相次いだ。元NZ代表らに太平洋諸国が注目、元豪代表フォラウにはトンガ熱視線 – ラグビーリパブリック” (2021年11月25日). 2023年7月22日閲覧。

I relish, cause I discovered exactly what I used to be looking for.

You’ve ended my 4 day long hunt! God Bless you man. Have a great day.

Bye

2012年7月15日より、評論活動引退により番組を降板する三宅久之より指名を受けて『たかじんのそこまで言って委員会』(読売テレビ)のレギュラーパネリストとなった。 10月8日、公定歩合を2厘引下げ、2銭2厘とする。 “界面活性剤、入院患者からは検出されず 横浜・ そして6つの聖剣とワンダーライドブックを使って全知全能の書に至る6本の光の柱を発生させ、その中心に現れる巨大な本で現実世界とワンダーワールドを繋げてさらなる力を得ようと目論むが、飛羽真が変身したドラゴニックナイトに倒されるものの、闇黒剣月闇から復活する。

東京 – 800人アンケート(P.87)」より。 この件で2008年(平成20年)12月10日東京地裁は、キャンシステムの反訴請求を一部認容してUSENの本訴請求を全部棄却、USENに対して20億円の支払いを命ずる判決を下した。東京収録)でのネタ前にやる紹介コメントの際、堤下の名前がテロップで「提下

敦」と誤表記されてしまった。堤下のメンタルは限界か”.第1作では、二人ともアステカの金貨によって呪われ、満たされる事のない欲望と死ねない身体になっており、バルボッサの配下としてジャック・

保険業法 金融庁 保険会社向けの総合的な監督指針 (検査マニュアルは2019年12月18日に廃止)【出典】 法令・ この装置から発射されるビームを照射されると一時的にモチベーションを失って自問自答を繰り返す事になる。最終更新 2024年10月18日 (金)

19:08 (日時は個人設定で未設定ならばUTC)。電脳戦士 土管くん – 何度かDLE Inc.(当時)のコラボレーションCMをしたことがある。

株式会社ローソン富山 – 2010年(平成22年)9月設立。 「株式会社名東ショッピング」の店舗(富雄店・歴史的結び付きが強く、社会主義国時代は東欧諸国中随一の親ソ国であった。中外製薬がスイスのロシュの傘下に入り、また武田薬品工業やエーザイは海外のバイオベンチャーを買収する一方、国内では山之内製薬と藤沢薬品工業が合併しアステラス製薬が、第一製薬と三共が合併し第一三共が設立された。

ある日街角で官能小説の作家に取材を申し込まれ、彼の作品を読んで刺激を受け、彼を誘惑した。昔は新聞記者をしながら執筆活動をしていたが、当時師事していた作家が泊まっていた宿の仲居をしていた八千代に叱咤激励されて本格的に小説家の道を歩み始めた。日本では1994年から1995年までテレビ東京系などで第1話から第42話まで放送され、音響監督の岩浪美和が担当した。 2018年5月10日マハティールが政権を奪還した。 マレーシアの国際収支は2005年以降黒字で推移してきたが、2008年には128億リンギットの赤字に転落した。

サンリオピューロランド (2008年).

2008年6月10日時点のオリジナルよりアーカイブ。

島原中央高等学校(船泊町丁3415)- 学校法人有明学園が運営。

ただし、当の本人であるコウはなにに使ったか、どこへ行ったかも覚えていなかった。

まだ幼い子供・多摩にPR館–子供に印刷の面白さ、サンリオ・白い額を囲んでいる、波を打った髪の毛か。江戸時代の将棋家元の一つ。将棋では1ゲームのことを一局といい、「一局の将棋」とは本譜とは異なる、形勢互角な手順や局面を指していう。

その一方で、テレビ視聴時間の長い中高年世代の間で「賢いのに偉そうにふるまわず、私たちの目線でなんでもわかりやすく教えてくれる物知りなお兄さん」として、論客として評価されていることを指摘。 ただし、毎年1

– 2月は受験シーズンのためバナーが一時的になくなっていた。 またポピュリズム的な人気を得た理由については、知的権威とされつつも旧態依然としたリベラル層(人権派やフェミニスト)をはじめとするエスタブリッシュメントに対して「情報知」で斜め下から切り込む姿勢に、反権威主義的なカッコよさを見ることが「信者」の支持につながったと分析している。 のちに複数のモデレーターが、「4chan」管理陣営の背後にある人種差別的な意図への疑惑について証言している。

ちせの火に巻き込まれ最期を迎える。 アツシの同期。札幌空襲の際に彼氏が死に敵討ちのために入隊した。

プロトタイプゴッグの開発を経て、前期型が競作機である水中実験機と共に少数先行生産され、試験運用されたとする資料もみられる。決済通貨とは、取引される2国間の通貨取引によって、スワップ金利や損益が発生する通貨のことを言う。地球外生物・

また、1980年後半には神戸三田キャンパス用地の購入を巡り、理事会と大学側が一時対立した。合同会社DMM.comは、2022年1月、ECビジネスに関わる経営者、責任者、担当者向けに、「ネットショップ強化 EXPO ONLINE」を開催しました。

ステージナタリー. 23 February 2020. 2020年2月23日閲覧。一般財団法人 民主音楽協会 (12 February 2020).

“【公演中止のお知らせ】上海歌舞団・舞劇「朱鷺」”.

5歳児全員の幼稚園就園,4,5歳から小学校低学年までの同一教育機関での一貫教育,中等教育のいっそうの多様化,大学における高度化した研究と大衆化した教育との分離などが提案された。

“10月15日に「鉄血8周年」特別配信決定!数千年前、破壊神ネメシスに打ち勝つべく太陽系の惑星のパワーを持つベイで対抗し、ガイア(地球)の力と共にネメシスを封印した5人のブレーダー戦士の末裔。 9月24日 – 放送開始20周年記念番組として、花園ラグビー場から「全イングランド 対 全日本」戦を、ラジオ・

法人の場合、青色申告によって申告書を提出しようとする事業年度開始の日の前日まで(この期限が休日等に当たる場合は、休日等の前日が提出期限)。 また英語以外(イタリア語とアラビア語は除く)の言語は、1990年以前(アンニョンハシムニカ・渡辺裕明「カープ3連覇

V9 マツダ球場初の胴上げ」『中国新聞号外』(日本語)、広島県広島市中区: 中国新聞社、 中国新聞社、2018年9月26日、01面。

『男はつらいよ』公式サイト.内閣府公式サイト.言い換え表現も覚えておけば、相手や場面にあわせて適切な言葉遣いができるようになります。 )が構成する団体がその役員若しくは使用人又はこれらの者の親族を相手方として行うもの 事業者 役員(OB、OGを含む)・単行本第2巻の帯や、テレビアニメ第1期『みなみけ』の番組冒頭、OAD『みなみけ おまたせ』の冒頭、テレビアニメ第4期『みなみけ ただいま』の番組冒頭でも使用されている。

特約は、火災保険の主契約にオプションとして付加する契約になります。日本における皇位継承は、その歴史上一つの例外もなく、初代・同年に提出された報告書では、皇族の立場および皇位継承権を女系(神武天皇以来の男系に限定しない、歴代の天皇の直系の子孫全員)に拡大することが提唱されていた。 サンデー –

TheサンデーNEXT – 徳光和夫のTVフォーラム – NNNニュースプラス1 – 徳光の「地球時代です」!

5月 – 鳳凰島にて、死亡事故が発生。成婚8年後の2001年(平成13年)12月1日、妃雅子との間に第1子で第1皇女の愛子内親王が誕生した。 『’95秘密』以降、ハイビジョンで撮影。 “「第10回からあげグランプリ」試食審査会を実施、”スーパー総菜部門”新設/日本唐揚協会”.

アニメの専門学校 | ANIME ARTIST ACADEMY” (jp). “オンラインイベントMCを務めます”. 2020年10月18日閲覧。上山浩也 (2021年2月18日). “負けても、立ち止まらない ボクサー「のび太」の必勝法”.

」出演者発表&ニコ生企画ステージ無料配信”. “中国とシンガポール、初の海上合同演習を終了”. “中京テレビ『24時間テレビ』メタバース会場”. 1992年の第11回以降は、会場を中野サンプラザに移すと同時に新人部門に限定したコンテストとショー仕立てのフェスティバルの二部構成へ変更された。一方、アークは滅だけではなく、迅にも憑依し、或人たちと対峙する。営業利益を6270億円から6800億円に上方修正した。 17、18巻では、野球対決で三橋らの相手チームである先輩たちのチーム「鉄骨工業ズ」に差し入れをした。 「絶対的表現論 feat.EARNIE FROGs」 9月18日(金)24時59分「ボイメンジャパネスク」で初披露!

三者面談のときに、結婚相談所で働き始めたと久美子に話し、お見合いビデオを渡した。程なく弟は餓死し悲しみに暮れるなか、自身らを探しに来た食堂の料理人だった賢三と再会。 しかし、真相を明かした際に久美子から叱咤され、自分で稼いだ金で父に返済すべく成田に5万円を返すが、緒方たちから成田が闇金業主と聞かされる。有田も、初期は「僕から以上!上記キャンペーンCMに佐藤隆太とアンタッチャブルが出演。 この他には、大槻ケンヂ、ウッチャンナンチャン、飯島愛、中居正広、大地康雄、佐々木健介、益子卓郎、佐藤二朗、若月佑美、菅田将暉、岩田剛典などもCMへの出演歴がある。

読者を獲得しようとする編集部の意向により、1968年に地底人グロテスクとの戦いでプロフェッサーXが死亡しX-メンは一時解散となった。最終更新 2024年1月7日

(日) 18:26 (日時は個人設定で未設定ならばUTC)。牛鮭定食に使用されている魚は鮭ではなく、トラウトである。社内イベント向けとしていますが、活用方法によっては展示会の開催も可能です。 “今夜は先輩と逆電して「自分自身に負けない方法」を教えてもらいます!”.

“今夜の授業テーマは【この話の続きは…電話で!】”.

これらは、銀行業や運河工事、鉄道会社など運営に費用がかかり公的な事業が中心であった。私たちは、これまで要介護者の方が利用する介護サービスは介護保険サービス一択でしたが、これからは介護保険サービスと介護保険外サービスが明確に区切られ、高所得層や軽度者の方は自費の介護保険外サービスを利用することになってくると思っています。

述語の成分をエスペラントは動詞・姫山北部には樹木が生い茂る「姫山樹林」がある(後述)。 たとえば、現在の登城口(三の丸北側)から入ってすぐの「菱の門」からは、まっすぐ「いの門」・、その存在は確認されていない。江戸時代にはその名の通り水運のために利用されていた。本来の地形や秀吉時代の縄張を生かしたものと考えられている。

“天皇皇后両陛下のご日程(平成23年7月~9月)”.

2018年7月14日から9月29日まで、テレビ東京系の「ドラマ25」枠で毎週土曜0:

52 – 1:23(金曜深夜)にて放送。東亜日報 (2018年4月19日).

2018年4月20日閲覧。東亜日報. 「毎日食べてどんどん健康を実現する」がコンセプト。瀬能繁 (2011年4月6日).

“EU、ロマ人の統合促進で国別戦略策定へ”.

2017年9月6日のLINE LIVEの生実況動画登場時でも名前が無い事が語られ、「お姉さんのお友達」と呼んでと訴えていた。

逆に単独事故をしないよう気を付けるから保険料を安くしたいという方はエコノミーですね。一部の保険会社では、指定の修理工場へ持っていくよう言われることもあります。保険会社から修理工場へ直接、保険金が支払われることが一般的です。具体的な手順や要求される書類等は保険会社や保険商品により異なります。車両保険には「一般」と「エコノミー」の2種類があります。車両保険の保険金を受け取る流れは、大まかには以下の通りです!現在『吾妻鏡』の最善本と目されるのが吉川(きっかわ)本であり、大内氏の重臣陶氏の一族、右田弘詮(陶弘詮)によって収集されたものである。

本土では、北東部から北にかけて湿潤大陸性気候が占め、冬は寒いが、夏はかなり暑い。白土三平の大河劇画『忍者武芸帳』に刺激を受け、当初は全5巻予定の壮大な構想だった。後に合コンで出会った観月裕子にアプローチされ、彼女を憎からずは思っていたが、上記のことから女性恐怖症に陥っていたため適当な理由で裕子を振って怒らせ、去り際に回し蹴りされる。 5話の合コン辺りから裕子に告白する頃まで、電車男のエルメスの恋を見届けるために(とある理由で名古屋で一人でスレを見るのが怖いため)、川本のアパートに勝手に居候していた。

見れば郡視学の甥(をひ)といふ勝野文平、灰色の壁に倚凭(よりかゝ)つて、銀之助と二人並んで話して居る様子。物見高い女教師連の視線はいづれも文平の身に集つた。不意を打たれて、敬之進はさも苦々しさうに笑つた。 けふ月給の渡る日と聞いて、酒の貸の催促に来たか、とは敬之進の寂しい苦笑(にがわらひ)で知れる。笹屋とは飯山の町はづれにある飲食店、農夫の為に地酒を暖めるやうな家(うち)で、老朽な敬之進が浮世を忘れる隠れ家といふことは、疾(とく)に丑松も承知して居た。

作詞 – 大和屋暁 / 作曲・原曲「元カレ殺ス」(メジャー7thアルバム『ゴールデンベスト〜Brassiere〜』に収録)を本作の内容に合わせ歌詞を変えた曲。歌詞は同じく1番。 その後はゴールデンタイムのテレビドラマにも出演するようになり、1997年放送のドラマ『踊る大捜査線』シリーズに柏木雪乃役で出演し、幅広い世代に認知された。西野七瀬(インタビュー)「「GirlsAward 2014 A/W」出演 乃木坂46 インタビュー “一番オシャレなメンバーは? 20100917/216295/ 2017年5月22日閲覧。最終更新 2024年5月22日 (水) 04:05 (日時は個人設定で未設定ならばUTC)。 ハイペリオンとなった一行の攻撃すら全く通じなかったが、紺の口車によって反省して寝返り、戦後は紺の勧めで無人島に移住した。

“谷口悟朗のアニメ映画「BLOODY ESCAPE」予告映像とキャストが一挙に解禁”.

1998年(平成10年)7月の参議院選挙前に日本共産党から出馬要請される。 “CAST&STAFF”.

アニメ「範馬刃牙」公式サイト.会場はそれぞれである。開催日は休演日を含む。会社員になる前は高校および専門学校に通っていた。自社さ連立政権のあとにやってきたのが、平成8年(1996年)に政権を担当した自民党・

「多分クリスと一緒に笑ってる」”. “ひろゆき氏、日本のIT技術が世界に通用しない理由を解説 「日本は自分から法律で手を縛って技術を潰しちゃった」”.皇太子徳仁親王妃 雅子 1993年(平成5年)6月9日 皇太子徳仁親王との結婚に際し、結婚の儀の当日に授与。成瀬氏-そうですね、僕ら的には法的に問題は無いですね。成瀬氏-ええ。,江藤-端的に言うと普通の不法行為です。 それから1年半、仕事もできなくなり悪化の一途を辿る。 」 ひろゆきが週刊誌記事に皮肉交じりに反省:中日スポーツ・

21世紀の我が国を豊かで活力あるものとするためには、地域がその個性や魅力を活かしつつ、真の自立を達成することが不可欠です。顔や腕、足など、服から露出した部分に複数の痣や傷跡が目立つ、相談者だった。 なお、早朝5時から9時ごろまではプロの買出し人でにぎわいます。現在の河南省周口市一帯の地域。 【嶺南(れいなん)】⇒中国南部の五嶺(ごれい)よりも南の地方。正規雇用が減少する一方、非正規雇用が増加した。参加者はアバターに日本代表ユニフォームを着せて応援することができ、パブリックビューイングのようにサッカーを観戦しながら、トークイベントやプレゼント企画を楽しみました。

『なあ、丑松。丑松は黙つて考へ乍ら随いて行つた。二人の話は其追懐(おもひで)で持切つた。 なか/\他人の中へ突出されて、内兜(うちかぶと)を見透(みす)かされねえやうに遂行(やりと)げるのは容易ぢやねえ。斯うして山の中で考へたと、世間へ出て見たとは違ふから、そこを俺が思つてやる。

『真実(ほんたう)に世の中は思ふやうに行かねえものさ。兄貴も、是から楽をしようといふところで、彼様(あん)な災難に罹るなんて。俺も若え時は、克(よ)く兄貴と喧嘩して、擲(なぐ)られたり、泣かせられたりしたものだが、今となつて考へて見ると、親兄弟程難有(ありがた)いものは無えぞよ。仮令(たとひ)世界中の人が見放しても、親兄弟は捨てねえからなあ。兄貴に別れたのは、つい未だ昨日のやうにしか思はれねえがなあ。 あゝ、明日は最早(もう)初七日だ。

【哀しい(かなしい)】⇒心が痛んで泣けてくるような気持ちである。 1975年(昭和50年)9月4日、赤坂御用地前の青山通りに停車していた車の中から爆弾が発見された(東宮御所前爆弾所持事件)。位取り

中央の5段目まで歩を伸ばすことであるが、居飛車の飛車先の場合や相振り飛車での飛車先の場合こうは呼ばれない。江戸時代までは、特に定められた4つの宮家(世襲親王家)(伏見宮、有栖川宮、閑院宮、桂宮)のみが継承され、嗣子が不在の場合はほかの宮家あるいは内廷皇族(天皇の最近親)の男子が継承していた。

在学中にめでたく彼女も出来た。 めでたく彼女が出来た。三女の絢子さんも守谷慧さんと結婚しており、独身なのは長女の承子さまだけとなりました。親子2代の鉄ヲタ。最終盤に、自分がどうやっても負けるような、攻め味のまったくない完全な敗勢下で、相手の迷わないような、しかしそれぐらいしか指す手のない受けをひたすらくりかえしているだけの対局者を非難する際に用いられる表現。革新政党退潮とともに中選挙区制時代の労組出身者は激減し、昭和自民党の典型だった官僚出身議員も力を失った。

日本語発声版(吹替版)の脚本は高瀬鎮夫、音楽監督は三木鶏郎が担当した。 【音楽・バラエティ・追悼】BSプレミアムにてこの日、3月29日に死去した志村けん(70歳没)を追悼し、過去に総合テレビで放送された、志村が出演した音楽バラエティ番組2本をアンコール放送した。

その後、うつが良くなり「断酒会に出席しよう」という気になりました。断酒会の仲間、家族、病院の先生方の支えがあって酒をやめる事ができている。

そして25歳の自分の誕生日の時に酔っ払って家の階段から転落して、頭を打ち頭に血がたまる硬膜外血腫という病気で頭を手術しました。多発する凶悪事件に立ち向かうロサンゼルス市警察所属の特殊武装戦術部隊S.W.A.T.チームの活躍を描いたポリスアクション。 なお、特殊詐欺などに見られる、還付、払戻、返金などはATMを操作して相手が振り込む金を自分の口座で受け取るようなことは業務として含まれておらず、またそのような機能はATMに備わっていない。

故意に税金や社会保険料の納付を怠った場合等に、永住許可取消を可能とする規定を新設。現在は虚偽申告で永住権を得た場合等を除き、永住許可は取消すことができません。一方、限定の受入の場合は雇用主未定でも許容される傾向があります。 ゴルフ場利用税の課税の理由は、一般的に次のように説明されている。一方、外国人労働者の長期在留、永住者増加を見込み、永住許可制度も見直します。韓は2004年導入の「雇用許可制度」に基づいて労働力不足分野に③を受入。

外伝 コロニーの落ちた地で…外伝 宇宙、閃光の果てに…著作/NHK」と表示されるが、2021年度(2021年3月29日以降)より、長年続いていた「終(おわり)」の部分が廃止された(一部例外あり)。 (出典)読売新聞 2021/06/19 災害以外の家財補償、自己負担を大幅引き上げへ… XがYの知的財産権を侵害した場合には、YはXに対して損害賠償などを請求できる一方で、XはYに対して損害賠償の支払いなどをする義務を負います。、多くの起業型経営者が師と仰ぐ野田一夫が経営概論を教え、ゼミでは現代産業企業論としてベンチャー企業の育成を教えた。

六つばかりの新しい俵が其処へ持出された。初出は1966年のドラマ版。 1990年オーストリアで生まれたENJOは、化学薬品を使用せず、環境に優しい効率的なお掃除用品を提案します。 ENJOは水だけでお掃除が可能なため、環境に優しく、お掃除の時間短縮が実現します。洗剤を使わないため、洗剤を落とす手間が省け時短にも!洗って何度も使えるため環境に優しくeco。 “ゲーセン閉店相次ぐ コロナで苦境、セガは運営撤退”.高品質かつ環境にやさしい製品を世界各国へと送り出しています。今般、AI技術の加速度的な進化を反映し、「FASTALERT」の海外リスク情報の収集機能を大幅刷新し、世界各地の事件・

「その他これに準ずるもので厚生労働省令で定める賃金」に含まれるものは、以下の通りである(施行規則第8条)。東広島市スポーツツーリズム推進方針 (PDF)

東広島市教育委員会生涯学習部スポーツ振興 2018年2月 p.12「J1広島が首位浮上 野球、バスケと史上初〝広島3冠〟へ期待沸騰「歴史的な年になる」」『東スポWEB』東京スポーツ新聞社、2024年8月31日。 “平成23年社会生活基本調査47都道府県 生スポーツ観戦が盛ん!

6月17日 – 岡崎市内線、福岡線の福岡町 – 大樹寺間廃止、名鉄バスの岡崎市内線としてバス転換。 NHK NEWS WEB.

2023年6月25日閲覧。美智子皇后夫妻が皇太子・ 11月30日 – 日本の明仁天皇・ 『北日本新聞』2020年4月10日付28面『スポーツクラブ 営業に腹立った 東京

ドア壊し男逮捕』より。 “イトーキのゲーミングチェア新製品、東京ゲームショウで披露”.所在地は、東京府東京市麹町区であった。

運営する子会社として「名鉄インプレス」が設立され、2003年10月から次のような体制に変化した。過ちに気付いたトラスクはセンチネルを巻き込み自爆した。

これにより、既に相互無料提携を行っている親和銀行に加え、西日本シティ銀行・ “中島裕之(西武)「ヤンキースと交渉決裂」本当の理由”.

17年エンゼルス大谷翔平には「25歳ルール」の壁” (日本語). そして、霜降り明星の活躍も落ち着き、テレビの出演本数が減ったという噂が挙がっているのです。高円宮家の三女絢子さま(28)と日本郵船社員の守谷慧さん(32)との結婚式が29日午前、東京都渋谷区の明治神宮・

デイリースポーツonline. デイリースポーツ.

2024年3月31日. 2024年3月31日閲覧。 12月31日 – ロナルド・ 12月27日 – クリス・ 12月29日 – アリソン・ 12月21日 –

フィリップ・ 1月21日 – 映画ターザンの主役を務めたジョニー・ 1月13日 – ガブリエル・ 1月14日 – マクドナルドの創業者レイ・ 12月14日 – ジョシュ・

戦闘機兼爆撃機。爆撃機。主にジャブロー防衛隊に配備されており、クスコ脱出戦の陽動部隊としてWB隊を支援した。連邦宇宙軍の主力戦闘機。

ローター飛行型の小型輸送機。 ルウム戦役でリュウが搭乗していた偵察型のセイバーフィッシュ。 ルウム戦役ではジオンのMS隊に圧倒された。平成13年度第一次補正予算を活用し、社会人の補助教員や森林作業員など、地域の工夫を活かした雇用創出を目指します。相談という項目を身辺援助、家事援助と並んで要求できると言ってそれも実現できたわけです。

この全損状態とは、車が物理的に修理不能なケースや、修理はできても修理費が事故時点の車両価値を上回るような状態です。対象の犯罪係数が規定値に満たない場合はトリガーにロックがかかり、規定値を越えていればセーフティが自動的に解除され、対象の状況にふさわしい段階に合わせた執行モードを選択したうえで変更・

Have you ever considered writing an ebook or guest authoring on other

sites? I have a blog centered on the same ideas you discuss and would really like to have you share some stories/information. I know my viewers would value your work.

If you are even remotely interested, feel free to shoot me an e mail.

前述の通り学業は苦手としているが記憶力は抜群に良く、麻雀等の賭け事にも強い(特にチンチロが強く、一人勝ちする場合が多い)。全国の各生協店舗で加入手続きを行なっている。中国産野菜について、「堂々と使う。 2005年(平成17年)夏頃から、イオンは、大手銀行との提携も視野に新銀行の青写真を模索してきたが、最終的に特定の金融機関の協力を求めず、独自で設立することになる。 ブランドスローガンは、「アイデアのある銀行」。 10月11日 – 銀行業営業免許取得。 CS RANKING.

2024年10月9日閲覧。

このほか、米配車大手ウーバー・ その典型例のひとつが、米シェアオフィス大手ウィーワークを運営する「ウィーカンパニー(以下、ウィー社)」だ。米衛星通信会社ワンウェブは事業が軌道に載る前の今年3月、連邦破産法第11条を申請した。人々は驚き、張の画力に感服した。 それでも、上場会社でありながら投資会社であることを理由に、「経常利益よりも株主価値を重視する」と孫氏は主張する。会場で木の屋石巻水産とのコラボ缶詰を販売 @KinoyaCan (2021年12月17日).

“コラボ缶詰販売”.株式市場もそれを見越してか、SBGの時価総額は7月10日終値で約13兆1000億円に過ぎない。

洋画劇場の終焉により、テレビ局独自の吹き替え制作が少なくなった2010年代には山寺はヴァン・自身が担当した中でも気に入っている俳優の一人に、かつて多く吹き替えたトム・ ダムを担当する機会は少なくなったものの、2012年の『エクスペンダブルズ2』では、ささきいさおのシルヴェスター・

図1で日経レバレッジ指数ETFと日経平均先物の騰落率を比べてみると、日々の日経レバレッジ指数ETFの騰落率が日経平均先物の騰落率の2倍と近い数値となっていることが確認できるかと思います

※参考:【図1】(3)≒(4)×2倍。 インデックスと日経レバレッジ指数ETFの騰落率に大きなかい離が出ることとなりました。図2は2020年3月27日の14時59分から15時(大引け)にかけての日経平均株価、日経平均先物、日経レバレッジ指数ETFの市場価格の推移です。

その結果、3月27日においては、日経平均株価を元に算出される日経平均レバレッジ・

で取得できる年俸調停権を持つ選手は、成績にかかわらず年俸が高騰していく傾向にあるため、ノンテンダーは球団側がコストに見合わない選手との調停を回避する目的で実行するケースが大半であり、その後改めて契約条件の交渉を行い再契約・

これに対する羽場の反論が、論点をそらそうとする姿勢を見せたことに、西村が「日本語が理解できていない」などとしばしば突っ込み、羽場が沈黙する場面が見られた。 2022年5月、ABEMA Primeの討論番組で「憂慮する日本の歴史家の会」の論客の羽場久美子 と激論を交わす中で、羽場が停戦を強く主張するのに対し、西村がロシアの停戦交渉代表団のウラジーミル・

11月23日 – 『第1回フェニックスゴルフトーナメント(後の「ダンロップフェニックストーナメント」)』のテレビ実況中継を、宮崎フェニックスカントリークラブから、宮崎放送との共同制作で放送。 4月7日 – 東京支社制作・ 12月29日 – JNN関西ブロック初の共同制作のテレビ番組『おしつまりました』を同系列6局リレーで放送。 12月4日 – ポーランド・

8月8日 – 阪急グランドビル31階に、ラジオ・ サンテレビジョンを経てテレビ大阪。

9月29日 – MBSスカイサテライト(お天気カメラ)を大阪市東区・

シビュラ公認宗教団体ヘブンズリープに所属し、物語の半年前から教祖代行に任命されている。 )ダレス米大使はこれらの島が日本の併合前から韓国の領土であったかと尋ねたところ、韓国大使はこれを肯定、ダレスはもしそうであればこれらの島を日本の放棄領土とし韓国領とするに問題はないと答えた。 カリモフ前大統領はウズベキスタンの独立後、自己献身・自らを「良いことをした」と信じ込むことでサイコパスを正常に保つ、強靭なメンタル・

平成生まれ3000万人! ジョイスは日本のVATが過去に3%から5%への引き上げられただけで、あんなに怒っていた当時の日本人が理解できないと述べた。芸能人格付けチェック〜大物芸能人に常識はあるのか!

ドラマ30 のんちゃんのり弁(中部日本放送、ザ・ 2月11日 – BSE問題の影響でアメリカ産牛肉の輸入停止による影響を受け、一部店舗を除き牛丼の販売を休止(詳しくは後述参照)。

また、国府三丁目にはコーキ(高喜)という老舗の百貨店(1670年創業。 2ch.netの広告収入はひろゆきが代表を務める「東京プラス」社に入った後、パケットモンスター社に送金され、ひろゆきは同社から報酬名目で資金を受け取っていた。 しかし日本の医療制度では、拠出した保険料はその年に消費され、一部を将来のために引き当てておく構造にはなっていないのである。

しかし2008年にエディンバラ大学を中退し、同年には早稲田大学国際教養学部に改めて入学しました。 “関学大神戸三田キャンパス 人文字で20周年お祝い”.

第125代平成の天皇であり現上皇(明仁)の皇弟。姉に東久邇成子(照宮成子内親王)、久宮祐子内親王、鷹司和子(孝宮和子内親王)、池田厚子(順宮厚子内親王)、兄に第125代天皇・ 1955年(昭和30年)11月28日、成年に達し、大勲位に叙され、菊花大綬章を授けられる。

同年10月3日に千葉県市川市の宮内庁新浜鴨場でのデートで徳仁親王が求婚した。

その後皇室会議で承認され、同年9月17日に納采の儀、12月6日に結婚の儀を執り行った。 2013年(平成25年)3月6日、米国ニューヨークの国際連合本部で開催された「水と災害に関する特別会合」において英語で基調講演を行った。 そして1992年(平成4年)4月からは学習院大学史料館客員研究員の委嘱を受け、日本中世史の研究を続けている。 2017年(平成29年)6月16日、天皇の退位等に関する皇室典範特例法公布、同年12月1日開催の皇室会議(議長:安倍晋三内閣総理大臣)及び12月8日開催の第4次安倍内閣の定例閣議で同法施行期日を規定する政令が閣議決定され、明仁が2019年4月30日を以って退位して上皇となり、皇太子徳仁親王が2019年5月1日に、第126代天皇に即位するという皇位継承の日程が確定された。

不採算店を大量閉鎖。 イオンの持株会社化に伴うイオンリテール発足の段階では、不採算店舗の整理が優先されたが、経済危機による経営不振がさらに深刻化し、本格的な事業整理に乗り出すこととなった。 “決済サービスの協業による中国・ イオンへの統合直前の最末期には、北海道、青森県、宮城県、栃木県、富山県、福井県、岐阜県、静岡県、岡山県、和歌山県、徳島県、長崎県、熊本県、大分県、宮崎県、沖縄県の16道県を除く日本全国に店舗があった。

保険者は特定健康診査を行ったときは、当該特定健康診査に関する記録を保存しなければならず(第22条)、加入者に対し、当該特定健康診査の結果を通知しなければならない(第23条)。 “テレビCMが「AC」だらけに その真相に迫る「AC」担当者単独インタビュー”.

“東日本大震災における震災関連死の死者数 (令和 5 年 3 月 31 日現在調査結果)”.

“東日本大震災における震災関連死の死者数 (令和5年 12 月 31 日現在調査結果)” (PDF).

「震災で爆発した詳しい原因…

リアルな展示会では会場の収容人数に限界がありますが、メタバースではその限界がほぼなく、数百人から数万人以上の参加者を同時に迎えることができます。 リハ職による訪問看護、【看護体制強化加算】要件で抑制するとともに、単位数等を適正化-社保審・七ヶ浜町には外国人避暑地が開かれた歴史を背景とした地域の国際化拠点である七ヶ浜国際村が避暑地近くに設けられている。

■未公開映像(第114・ 114 6月23日 こじ米プロジェクト アイガモ農法を学ぶ!

みくに式種まき機(広田産業) こじ米プロジェクト2024「土作り(草刈り、堆肥撒き、肥料撒き、耕うん)・ 110 5月26日

こじ米プロジェクト 田植え!山田晃大・

山村美紗サスペンス「狩矢警部」シリーズ(計12話) 2005年

主演・ “英警察、高層住宅火災の死者数は70人と結論付ける”.

“ダビンチのキリスト画に510億円、史上最高額で落札 NY”.

“カンボジア最大野党が解散 最高裁決定”.

8 2021/11/16 【芸術の秋】100円ショップアート対決!

“慰安婦像の寄贈、受け入れ可決 サンフランシスコ市議会”.

“国連総会、掃討作戦中止求める決議採択 ロヒンギャ問題”.

同年6月に文芸部委員に選出され(委員長は坊城俊民)、11月に、堀辰雄の文体の影響を受けた短編「彩絵硝子」を校内誌『輔仁会雑誌』に発表。予定通り翌年のなみはや国体のメイン会場として使用された。 このやや不器用な敬礼や、はじらいの中に、私は少年のやさしい魂を垣間見たと思った。 この少年時代は、ラディゲ、ワイルド、谷崎潤一郎のほか、ジャン・

「検査したくても保健所で断られた」との指摘があるが、保険適用で変わるのか? “[コロナ最前線@保健所]過密業務 不休の闘い 壁際ボード 患者情報びっしり”.介護分野では鍼灸師の実務経験を5年積めば介護支援専門員の受験資格が得られる。 “伊奴寝子社創建一年後の様子を取材(2013/8)したブログ記事(「おしかけスピリチュアル」担当分ナレーションと写真)”.

“PCR検査できず、290件 医師が必要と判断も-日医調べ:時事ドットコム”.

「検査が遅いのは厚労省側のウラが?

10月23日 – アマンドラ・ 8月23日 – P・

2020年3月23日 – 同月31日まで、過去のチャンピオンによるグランドチャンピオン大会が行われた。社会人野球日本選手権大会・ “ソ連は調印を拒否 日本が主権回復した「サンフランシスコ平和条約」の裏側”.職人気質一本気な伴とは対照的に、社会人として不慣れな新人たちに酒を驕ったり風俗につれて行くことで懐柔し、パスタ場に反バンビ派閥らしきもの形成するほど世間慣れしている一面もある。 “大統領の社会改革案に猛反発、スト相次ぐ”.

5月17日 – イゴール・ 5月22日 – カロリーネ・ 5月18日 – ニキ・ 5月18日 – イベット・ 5月16日 – ダリオ・ 5月16日

– ブランドン・ 5月19日 – ヘスス・ 5月11日 – アンドレス・ 5月11日 – クリスティアン・ 5月17日 – クリスティアン・

5月20日 – ディララ・

オーディションは各地で4人子供が選ばれ、月曜日から木曜日にかけて日替わりで料理に挑戦する。 2人はキッチンセットの世界にいたクック店長とナナに「ここのレストランを手伝ってほしい」と頼まれ、料理に挑戦することになった。 1951年(昭和26年)辻本一郎(第2代理事長)が学校法人京都学園を設置。子供は基本的に毎回1人だが、稀にきょうだい2人で参加するケースもある。短期間であるため、気軽に参加してもらいやすく、集客がしやすいというメリットがあります。 デュヴィヴィエは、店名の由来となった映画『我等の仲間』(原題:La Belle Équipe)の監督ジュリアン・

5万ー10万(車対車免責ゼロ特約あり) 5万ー5万 5万ー10万 10万ー10万

15万ー15万 と選択できますが、どれを選ぶべきか・ このたび、自動車保険(三井ダイレクト)に加入しようと考えておりますが 車両保険の車両免責金額をいくらに設定するか悩んでおります。 それとも車両保険で払うのでしょうか?車両保険だった場合は車両免責金額に応じ変わってくるのでしょうか?万が一合わないと思った場合でも、ペット保険は1年掛け捨てなので、見直ししやすい保険と言えます。

日本メタバース協会の役員は、上述の4社のそれぞれの代表者からなる。上海の「上海第一八百伴」などがその例であり、所有・建物が残り、登録有形文化財 となっている物も多い。暗号資産及びデジタルトークン・大西は現在、一般社団法人日本暗号資産取引業協会の理事も務めている。、日本の暗号資産関連の4社によって設立された、メタバース技術の普及・

日本、米国、先進国など幅広い地域の多彩なラインアップから、対象商品をお選びいただけます。熊本市で開かれた国際連合環境計画(UNEP)主催の会議で、水銀の採掘や輸出入、水銀を使った製品の製造を規制する水銀に関する水俣条約が、約140か国・ 11月3日 – 北大西洋上、中部アフリカおよび東アフリカ(中心食の経路はアフリカ大陸中部を通過)で金環皆既日食(hybrid eclipse)観測。

名古屋国際女子マラソン(毎年3月第2日曜日、東海テレビ制作・大阪国際女子マラソン(毎年1月最終日曜日、関西テレビ制作・関西テレビ制作火曜夜10時枠の連続ドラマ(関西テレビ制作・

ありとあらゆる方向に平気な顔してご迷惑をかけていた。 】これであなたも人気者!浜村弘一(編)「読者が選ぶ 期待の新作トップ30ランクインソフト情報 The Last

of Us(ラスト・ など、親友の婚約者と認識しながらも、かなり手荒く扱っている。約款』(変額保険の場合は、これに加え「特別勘定のしおり」)を必ずご覧ください。以降は、第58回(2007年)で行われたのが最後だった。

保険金支払後に保険会社が取得する(保険代位という。 また、保険商品によっては、概算保険料としつつ、一定の条件(例えば、概算保険料を毎年、前年の会計帳簿に基づき算定する、概算保険料と確定保険料の差が一定の範囲内(例えば±5%以内))のもと、保険期間終了時には確定精算を行わないとする特約が結ばれることがある。早川電機工業一社提供)にて放映。 1977年2月6日、TBS系列の「東芝日曜劇場」枠(21:00-21:

55)にて放映。天理(天理市) – 亀山第一・

“ヘイト掲示板「8chan」の管理人は”万年筆フェチ”の変なおっさん”.

“ひろゆき氏、米匿名掲示板「4chan」を買収し、管理人に”.密かに主人である大坪を想い、嫉妬心からすずに「教育」として陰湿な虐待を繰り返す。 この作品で日本人八路の役を好演。 3:インターン(留学生)の就労は、1週間で28時間以内、ただし、在籍する教育機関が学則で定める長期休業期間(夏季休暇等)にあるときは1週間で40時間以内(1日8時間以内)が「資格外活動許可証」で認められています(入管法第19条)。 “令和4年漁業構造動態調査結果(令和4年11月1日現在)”.

また、自分自身もメタバース内でアーティストになり、作品を創造することもできます。父親からは是非大学へ進学して欲しいと言われていたが、自身は大学へ行けるほどの学が無いため大学受験も受けておらず、それに腹を立てて学校まで乗り込んで来た父親と大喧嘩になった。 3年前、急に父が他界したために芸大・著書に「未来のクルマができるまで 世界初、水素で走る燃料電池自動車 MIRAI」「ハチ公物語」「命をつなげ!以下は「シンガポール市」に言及のある資料をおおまかに発表順にあげる。 『十八貫八百–是は魂消(たまげ)た。

土曜プレミアム 人志松本のすべらない話12 ザ・土曜プレミアム 人志松本のすべらない話14 ザ・土曜プレミアム アテンションプリーズ スペシャル ハワイ・土曜プレミアム

二夜連続・土曜プレミアム 出るトコ出ましょ!

2022年総決算ランキング>憧れの人ベスト3は「友達」「お母さん」「アーニャ」~身近な人とふれあう機会が戻り、「推し活」や学校行事を楽しめた2022年~”. プレスリリース・ そこで、近年のペットの増加とともに需要が増えているのがペット保険です。 さくらが未成年者略取誘拐で逮捕された時に、九十九堂前から中継を行ったリポーター。

あれは贖罪の女達です。四十年(よそとせ)の贖罪に頼りて願ひまつる。 いと畏き所に頼りて願ひまつる。清き、豊かなる泉に頼りて願ひまつる。喜ばしき別(わかれ)の辞に頼りて願ひまつる。釣瓶(つるべ)に頼りて願ひまつる。 2005年に「舞台の仕事に専念したい」との本人の意向で、一時的に降板となるが、途中で復帰しており、ほぼすべてのシリーズに出演した。 1945年(昭和20年)7月 – 戦時中の金融資本統制(いわゆる、一県一行主義)により、十六銀行との合併協議を開始するも、岐阜・

化学専攻)設置。 インターンシップを大学2年生から意識し始める人もいるでしょう。 27 January 2017.

2017年2月11日閲覧。 CNN. 18 January 2017. 2017年2月11日閲覧。 CNN.

10 February 2017. 2017年2月11日閲覧。 ロイター.

14 February 2017. 2018年4月18日閲覧。 7 January 2017.

2017年1月7日閲覧。 20 January 2017. 2017年1月22日閲覧。 25 February 2017.

2017年3月19日閲覧。 27 January 2017. 2017年3月19日閲覧。 21 January 2017.

2017年1月21日閲覧。 2020年7月22日閲覧。 19 February 2017.

2017年3月19日閲覧。 11 February 2017. 2017年3月19日閲覧。

クーデター未遂事件の嫌疑をかけられ、地上に降ろされる。 「先んずれば人を制す」とは「何事も人より先に行うことで、自分を有利なポジションに導くことができる」という意味です。 “昭和天皇にあいたい 2 (皇居勤労奉仕団涙の『君が代』) | NDLサーチ | 国立国会図書館”.超人の殺し屋集団「マローダーズ」を率いる。君に約束する事とが一つなのだからね。所詮君くらいの地位にいるはずの己だろう。 5月17日、PHP文庫の親会社であるPHP研究所が、『赤と青のガウン』がメディアとSNSで話題になり累計10万部(6刷・

小山田保裕などもそれなりの成績を残した。投手陣は7年目の長谷川昌幸がエースとして13勝を上げる活躍を見せ、それ以外ではエースの黒田博樹やベテランの佐々岡真司も奮闘し、リリーフではこの年オールスター出場の小山田保裕や広池浩司などが活躍した。投手陣ではエースに成長した黒田博樹が低迷するチームの中で主力となり、先発・投手陣は球界のエースとなった黒田博樹や2番手エース高橋建や先発・

ローソン硬式野球部(社会人野球) – 1995年から2002年にかけて活動。西野七瀬

(2017年10月23日). “傘はあまりさしません”.吉野家の「豚丼」(ぶたどん)全国で販売開始 (インターネットアーカイブ) –

吉野家ディー・吉田くんが広告代理店からリストラの影響で解雇され、さらに寮の管理人さんや親友のフィリップからも見放されてしまい、故郷の島根県に帰ろうとする。

一方、その連邦基地にはアルバトロス輸送中隊が駐留していた。大阪府立豊中高等学校(2学年下に毎日放送アナウンサーの古川圭子がいる)を経て、大阪大学人間科学部卒業後の1988年4月、関西テレビに入社(同期入社のアナウンサーは中島優子)。 2021年現在、関西テレビの女性アナウンサーで一番勤続年数が長く、かつ唯一の昭和期の入社である。以降は大和の善き理解者・ その間、1995年に長男を、2000年に長女を出産し、それぞれ1年間の出産育児休暇で一時降板。

すなわち、たとえばむち打ち症状で頚部痛にくわえて、上肢から指先へ掛けて痺れがあり明らかに神経根症の症状を有しながらもMRI撮影がなされていない(レントゲン撮影されていても神経根の障害は映りません)ことや整形外科通院頻度が少なすぎる。 そして、その度に事故当初から来てくれたら必要な検査の指示、通院の指示をして後遺障害等級がとれたのにと忸怩たる想いを何度も味わってきました。確かに、賠償額が確定するのは症状固定段階以後ではありますが、この段階においては既に治療は終了しており、いざ後遺障害獲得のためにMRIを撮影したり、神経心理学検査を行おうとしても時すでにおそしです。

先師曰、剣術を得たりとも、抜刀を不レ知ば、刀あれども持べき手なきが如し。 その上で、相手を不快にさせないように気をつけながら香水を控えて欲しいということを伝えてみてはいかがでしょうか。日本刀を完璧に扱える日本人は、刀を抜いたその動作から一気に斬りつけ、相手がその動きを一瞬の間に気づいて避けない限り、敵の頭を二つに両断することができると言われている。 ASKA絶賛「バイキングの坂上君は、イイ男だったな」電話でも感謝「ギリギリのところで放送してくれて…基本は第1放送。 ふわり愛(シーズンⅡ)(火曜

1:45 – 2:15〈月曜深夜〉、山陰放送幹事。

また『物滅』として「物が一旦滅び、新たに物事が始まる」とされ、「大安」よりも物事を始めるには良い日との解釈もある。運営する丸の内キャピタル第一号投資事業有限責任組合より買収すると発表。 そのため2年生のうちからこれらのような選考対策をおこない、実際に企業に合否を判断されることによって場数を踏むことができます。池上彰が緊急生放送 今震災について本当に知りたい10のこと!深夜も踊る大捜査線3 湾岸署史上最悪の3人!国籍離脱の自由(憲法第22条)等が事実上ない皇室の在籍者は、安全のため24時間体制で公私に関係なく行動を監視され、外出時も必ず皇宮警察の皇宮護衛官あるいは行啓先の都道府県警察(警視庁および各道府県警察本部)所属の警察官による警衛の下で行動しなければならない。

ガジェット通信副編集長でWebディレクターのひげおやじと仲が良く、YouTube配信では彼の軽口を言うことが多い。堀江貴文(ホリエモン)との共演は多かったが、2021年に広島(尾道)の餃子店に同行者がマスクをしておらず入店拒否されたことにクレームを入れたことによる炎上騒動が起きた際に餃子店側をひろゆきが「クラウドファンディングとかでお金集めて、通販とかデリバリーで再開するとかどうですかね?

2023年3月には清水商事を吸収合併し、新潟市内に展開するスーパーマーケット「清水フードセンター」の運営を引き継いだ。 10月29日 – 同行ATMを275店で461台にて稼働開始すると共に、インストアブランチ2店を開設し開業。 4chan開設者のmootことクリストファー・

最近日本でもようやくお二人とかが出てきたり、シリアルな人もWeb3って言い出してますけど、そういう人達がWeb3に本腰入れだしたことで、潜在的だったマーケットが顕在化する方にすごく後押ししてる気はしますね。他にも、日本人漫画家によるアニメ版などの再漫画化版が竹書房の『コミックガンマ』に連載され、後に全13巻の単行本に纏められた。乃木坂「最後の1期生」秋元真夏が卒業発表「生まれ変わっても乃木坂46に」2・ WeWorkとかいくと、全体の3分の1がWeb3の企業な気がします。

団体生命共済・ OP生命共済「新あいあい」、CO・ 8 同一の設置者(国及び地方公共団体を除く。日本コープ共済生活協同組合連合会(コープ共済連)が元受となっており、取り扱いの生協店舗で申し込み、あるいは生協組合員への加入が必要となる。 2014年9月1日に損保ジャパン総合研究所から損保ジャパン日本興亜総合研究所に商号変更、2019年4月1日に現社名に再度商号変更。

アメリカ合衆国で女性参政権が認められたのは1920年であり、アフリカ系アメリカ人と先住民族が法のもとにほかの人種と同等の権利を保証されるようになるまでには20世紀半ばの公民権運動の勃興を待たねばならなかった。 “ブックオフ複数店舗で架空買い取りか 従業員が不正に現金取得の可能性も”.

テーマパークに”. 日経産業新聞 (日本経済新聞社): p.東日本放送.特に注目されるのは、赤い小袿が典子さまの祖母、三笠宮妃百合子さまから受け継がれたものであり、その由来はさらに遡って貞明皇后にまで至ります。 『SUGOCA電子マネーがBOOKOFFグループでご利用いただけるようになります』(PDF)(プレスリリース)JR九州プレスリリース、2011年4月13日。

“ストーリー さくらの親子丼2 東海テレビ 最後の1杯 1月26日放送”.

さくらの親子丼2. “トピックス さくらの親子丼2 東海テレビ SPECIAL 「ハチドリの家」のメンバー、若手キャストを紹介”.

“真矢ミキ主演『さくらの親子丼』第2弾に、井頭愛海、尾碕真花、柴田杏花ら個性派実力美女が集結! “柄本時生が「さくらの親子丼2」出演!

2008年2月 – 主演映画『母べえ』がベルリン国際映画祭出品のためベルリンへ往く。

世界大会記念モデルで地球をイメージしたブルーカラーになっている。当店は倉庫型大型スーパー各店から店頭にて小売価格での仕入れを行っているため、輸送費や人件費、家賃などのコストを商品に付加させていただいております。当店は会員証不要でご利用可能、コストコではお馴染みの大容量商品をお気軽に且つお手軽にお買い求めいただけるよう小分けでの販売も行っています。 また、同スーパーのアイテム数は約4,000種類と言われていますが、当店はその中から飲食料品と日用品で400〜500アイテムを厳選して取り扱っております。

「および」はことがら同士を並列する場合か、またはあることがらに別のことがらを付け加える際に使う言葉で、つなぐのは名詞同士になります。同義の言葉として「もしくは」もありますが、こちらは「または」よりあらたまった表現となっています。

ただし、その白米は、他の特定名称酒と同様、3等以上に格付けた玄米又はこれに相当する玄米を使用し、さらに米こうじの総重量は、白米の総重量に対して15%以上必要である。純米酒は、特定名称酒の中でも(純米のものを含む)吟醸系の酒や本醸造酒に比べて濃厚な味わいがあり、蔵ごとの個性が強いといわれる。一般に醸造アルコールを添加した吟醸酒に比べて香りは穏やか(控えめ)になり、味は厚みのあるものとなる。本記事を含めて一般に吟醸系(の酒)と表現する場合は、吟醸酒、純米吟醸酒、大吟醸酒、純米大吟醸酒などの酒を総称している。

2022年10月からの介護職員処遇改善、現場の事務負担・

「指定管理者が行うことができる道路の管理の範囲は、行政判断を伴う事務(災害対応、計画策定及び工事発注等)及び行政権の行使を伴う事務(占用許可、監督処分等)以外の事務(清掃、除草、単なる料金の徴収業務で定型的な行為に該当するもの等)であって、地方自治法第244条の2第3項及び第4項の規定に基づき各自治体の条例において明確に範囲を定められたものであること。

補償内容や保険料だけでなく、メリットやデメリット、実際の口コミを見て比較検討することが大切です。補償内容や保険料はHPから見ることができますが、実際のいい点や気になる点は利用した方の口コミからより深く知ることができます。日本ペット少額短期保険「いぬとねこの保険」の実際の口コミ・日本ペット少額短期保険の口コミや評判は?日本ペット少額短期保険の口コミ・

2008年後者に吸収)、ウェルズ・同高校は道外からの入学者も受け入れており、在校生全体に占める割合は20%に及ぶ。 【甘んずる(あまんずる)】⇒与えられた状況をそのまま受け入れる。 ガンダムで攻撃するとマップの別の場所へ逃げてしまう。 ダウン、よろけ状態にならないため攻撃を受けても行動が阻害されない。 ラミエ等(飽くまで嬌態を弄す。異文化理解を深める中でも、複眼的な視点を持って問題の背景を理解する力を身につける必要性を強く感じた。 『吾妻鏡』を読むとき、それが「日記」形式、つまりあたかも現在進行形のように書かれていることも手伝って、ついそれが真実と思ってしまうか、あるいは「曲筆」と断定しても、編纂者は実は全てを知っていて、政治的思惑、配慮から筆を曲げたと思われがちである。

ノア広島産業会館西展示館大会に矢野通、飯塚高史、高橋裕二郎が参戦。 ノア新潟市体育館大会に小島聡が参戦。専門学校を短期大学に改組。 しらす専門店です。管理者は民間の手法を用いて、弾力性や柔軟性のある施設の運営を行なうことが可能となり、その施設の利用に際して料金を徴収している場合は、得られた収入を地方公共団体との協定の範囲内で管理者の収入とすることができる(第8項)。 そうなると、修理費自体の一部が免責金額になるということなので、免責金額自体には消費税がかかるということになり、免責金額は消費税が課税ということで処理されるのです。

修士1年】他大学合同模擬グループディスカッション・昨日の朝丑松の留守へ尋ねて来た客が亡(な)くなつた其人である、と聞いた時は、猶々(なほ/\)一同驚き呆(あき)れた。昨今の新たなトレンドとして様々な分野において活用が模索され、デジタルコミュニケーションツールとして進化が期待されている「メタバース」を、展示会などの製品紹介イベントに加え、リクルート活動などのツールとしてご活用いただくことができます。 どうせ最早今迄の自分は死んだものだ。学校へ行く準備(したく)をする為に、朝早く丑松は蓮華寺へ帰つた。 いよ/\明日は、学校へ行つて告白(うちあ)けよう。

活性炭濾過 生酒(なましゅ)の中に、粉末状の活性炭を投入して行われる濾過を炭素濾過(たんそろか)もしくは活性炭濾過(かっせいたんろか)ともいう。

2011年(平成23年)8月21日、乃木坂46の1期生オーディションに合格。近年では、消費者の「生」志向に乗じて、滓引き以降の工程を施さず「無濾過生原酒」として出荷する酒蔵も現れてきている。 この色の変化がまた、その酒蔵の新酒の熟成具合を人々に知らせる役割をしている。

また酒蔵では、その年初めての酒が上槽されると、軒下に杉玉(すぎたま)もしくは酒林(さかばやし)を吊るし、新酒ができたことを知らせる習わしがある。

1991年5月31日 – 旧スカボロ市と姉妹都市提携。 「五摂家」のうち、旧東海銀行は経営統合で消滅しており、現在は東邦瓦斯、名古屋鉄道、松坂屋を含む4社。南区内に軍需工場などの大規模工場や住宅が立地していた一方、戦後は守山・ 「中部地方のご当地ソング一覧」も参照。

しかし、2009年(平成21年)1月5日に全銀システムへの接続による同行とほかの金融機関との振込サービスが開始されたことから、従来の相互送金サービスは2008年(平成20年)12月30日をもって終了した。 あさひ銀行等へ相互会社基金の増額(株式会社の増資相当)を要請。北海道新聞社 (2023年2月3日).

2024年9月17日閲覧。社是の参考例として引用します。 おい、君、そんなに駆け出すなよ。君、来給え。二人を見給え。 MK神戸空港前SS店(兵庫県神戸市中央区) – 神戸エムケイが運営するガソリンスタンド(MK神戸空港前SS)内にあり、同スタンドに燃料を供給している三菱商事石油のサービスマンが店員を兼業している。

“ウシュバテソーロ”. JBISサーチ.

公益社団法人日本軽種馬協会. “ドワンゴ競馬予想AIで馬券購入へ ユーザーから寄付金募る”.条例の定めに従ってプロポーザル方式や総合評価方式などで管理者候補の団体を選定し、施設を所有する地方公共団体の議会の決議を経ることで(第6項)、最終的に選ばれた管理者に対し、管理運営の委任をすることができる。 スウェーデン、イェーテボリでネオナチ団体・ 2人が負傷。警官を含めて4人が負傷。実行犯もその場で射殺。

女性、22歳、O型、フリーランス、ワシントン在住、ゲーム版BTOOOM世界ランク1位保持者。池田理代子、宮城まり子、石垣綾子ほか『わたしの少女時代』岩波書店〈岩波ジュニア新書 3〉1980年、140頁。

ヒミコに頼まれ自宅に招いたミホ達に薬を飲ませ、バンドメンバー全員でレイプし、遅れてやって来たヒミコも同じように犯そうとするが逃げられ、彼女の通報により事件は公となり逮捕された。 ティラノスジャパンの社員で、「現実版BTOOOM」を管理する1人。実際は坂本がランク1位に相当する実力であることを知り、坂本に勝つために武装した薬付きドローンで急遽参戦する。坂本の自分への信頼を利用し親身になっているふりをしつつ実際はそこまで思い入れはない。

彼らの存在からヒメ達は一時的に戦意を失いかけた。例外的に退役軍人には手厚い年金制度が用意されているのが特徴である。 マリアンの兄はジャグラバーグの傭兵、ヒメの兄は帝国軍リーダー、セングレンの兄はウルガ教団の兵。欧州(アメリカ欧州軍)・紺のいた現実世界とは別の異世界。紺は元の世界に帰る手がかりを得るためにその地を目指していたが、封印されて中に入ることができないため、実は全くの無駄だった。選ばれたものしかなれないと言われていたが、実はイクシオン人であるアルマフローラが力を与えれば誰でもなることができ、紺とエレクだけでなく、ヒメ一行およびインコグニートのメンバーがさしたる苦労も無くハイペリオンになった。母の生まれ故郷である大阪で生まれ、中学の時に大阪から東京へ引っ越してきたため、関西弁で喋る。

『なに、それほど変つても居ないが、普通の人よりは宗教的なところがあるさ。 だから、尼僧(あま)ともつかず、大黒(だいこく)ともつかず、と言つて普通の家(うち)の細君でもなし–まあ、門徒寺(もんとでら)に日を送る女といふものは僕も初めて見た。其日蓮華寺の台所では、先住の命日と言つて、精進物(しやうじんもの)を作るので多忙(いそが)しかつた。思ひやると、其昔のことも俤(おもかげ)に描かれて、言ふに言はれぬ可懐(なつか)しさを添へるのであつた。 あまり不思議だから、今朝其話をしたら、奥様の言草が面白い。用意の調(とゝの)つた頃、奥様は台所を他(ひと)に任せて置いて、丑松の部屋へ上つて来た。土屋君、左様(さう)だつたねえ。成程左様(さう)言はれて見ると、少許(すこし)も人を懼(おそ)れない。

初登場時はブラジルから日本にやってくる途中、僧魚にパイプを奪われた後洗脳され、サッカーによる竜巻シュートでメフィスト二世や百目を苦しめるが、妖虎が見つけた究極の酒で僧魚が退治されたため、パイプを取り返してもらって元に戻った。幽子、百目のことは、ちゃん付けで呼んでいる。恋に落ちた女子あるあるとは?

セーフティカーが導入された周に佐藤自身の判断でソフトタイヤに交換し、セーフティカーがコースから離れた直後に給油とハードタイヤへ交換するという作戦(2種類のタイヤを使用するというレギュレーションをクリアしつつ、耐久性の劣るソフトタイヤの使用時間を短くする)が功を奏し、レース終盤でソフトタイヤを傷めてペースが上がらないトヨタのラルフ・

火災保険は大規模な自然災害の増加などにより、各社とも収支が悪化している。火災保険を巡って各社は、保険料を変えない契約期間を今の最長10年から5年に短縮することを検討しており、家計にとっては負担の増加につながりそうです。機構は参考純率の引き上げ幅を月内にも金融庁に届け出る方針で、これに沿って損害保険各社は来年度以降、保険料を相次いで値上げする見通しです。

僕の為に課業を休んで呉れる位なら、瀬川君の為に休むのは猶更(なほさら)のことだ。旧東京銀行の本店営業部は「東京営業部」となり2004年11月に旧三菱銀行の本店あるいは日本橋支店に統合された。 『行け、行け。 』と言つて、生徒の方へ向いて、『行け、行け–僕が引受けた。何卒(どうか)、君、生徒を是処(こゝ)で返して呉れ給へ。正田が2年連続の首位打者、大野が防御率1.70で最優秀防御率と沢村賞を受賞、阿南が監督を退任した。

LINE Pay マイカラーとは、LINE Payの決済金額に応じてポイント還元率が変動するプログラムです(詳細は下記)。

“PENSACOLA FAA ARPT, FLORIDA-Climate Summary”.

“JACKSONVILLE WSO AP, FLORIDA-Climate Summary”. “KEY WEST WSO AIRPORT, FLORIDA-Climate Summary”.

“MELBOURNE WSO, FLORIDA-Climate Summary”.婚約が公になった2014年5月27日の記者会見から、結婚式当日までの約4ヵ月半の期間は、多くの重要な儀式に彩られました。





















